The Agentic Pulse

What Types of AI Agents Are Being Adopted and What Are the Risks

Enterprises are deploying AI agents faster than they can secure them. The Agentic Pulse tracks how agentic systems are being adopted, and the identity and access risks emerging as these agents gain autonomy inside enterprise environments.

AI agents are rapidly moving from experimental chatbots to operational systems that execute tasks, access internal data, and interact with enterprise infrastructure.

But while organizations focus on productivity gains, most have little visibility into the identities, permissions, and actions of these agents.

The Agentic Pulse provides an inside look at how organizations are adopting AI agents today and how to prioritize security risk.

The Three Types of AI Agents in the Enterprise

Not all AI agents operate the same way. Based on how they interact with systems and workflows, most enterprise deployments fall into three categories.

As agents evolve across these categories, their autonomy, access patterns, and identities change dramatically, altering their risk profile.

The Most Common Entry Point for AI Agents

Agentic chatbots are extensions of traditional AI chat interfaces that users have granted access to organizational systems, services, and data. Token Security research has found that 49.8% of all chatbots are agentic chatbots. These agents typically operate within managed AI platforms and are triggered directly by user prompts. Agentic chatbots may retrieve internal documents (e.g., Google Docs, Microsoft 365), interact with SaaS platforms (e.g., Salesforce, Snowflake, Monday.com), and perform limited workflow automation.

Characteristics

No-code, anyone can create them

Business users across sales, HR, marketing, and support can easily create agentic chatbots without involving engineering or security. The barrier to creating an agent with real system access has dropped to zero.

Built-in sharing makes them easy to distribute and hard to govern

Managed AI platforms are designed for collaboration. A chatbot's creator decides who gets access to it, effectively becoming the identity administrator for whatever credentials that chatbot holds.

Access is configured through static credentials or uploaded data

When chatbots connect to external systems, they typically use hardcoded API keys or OAuth tokens belonging to a dedicated (or shared) identity. Additional knowledge can be added by uploading files directly into the platform.

The Fastest-Growing and Least Governed AI Agents

Local agents run directly on employee endpoints and interact with systems using the user’s own permissions and network access. These agents are triggered through human interaction, but often execute multi-step tasks autonomously. Local AI agents can be used to read, search, and modify local files or codebases, execute terminal commands or scripts, interact with SaaS tools (e.g., GitHub, Slack, Notion, Jira), and query databases or APIs using the user’s credentials. Local agents can operate at any level of autonomy and can use the complete range of access that the user has. They are the path of least resistance for high-access AI adoption: no provisioning, no IT tickets, no new identities.

Characteristics

Identity inheritance by design

Local agents do not have their own identities. They use the employee's credentials, network position, and system access. To the target systems, the agent is the user. This makes them easy to adopt and invisible to security teams.

Every employee becomes an identity administrator

Decisions about what access an agent gets and how autonomously it can act shift from IAM and security teams to individual end users. A developer decides whether their agent can write to production databases. A marketing manager decides whether their agent can access the shared drive. These are access governance decisions being made by people who were never trained to make them and who are incentivized to prioritize productivity over security.

No fixed intent

Unlike purpose-built tools, local agents are general-purpose assistants. Their purpose changes with every prompt. This makes least-privilege access fundamentally harder. There is a direct correlation between how much access the agent has and how useful it is.

Expanding beyond technical users

Local agents are shifting from developer tools (coding assistants) to general-purpose workplace assistants for all employees. Non-technical users who do not understand how filesystems, networks, and IT processes work are now making security-critical configuration decisions.

The Most Powerful and Most Critical to Secure

Production agents are AI agents deployed as backend services inside cloud infrastructure. They are built by engineering teams, embedded directly into production workflows, and often part of a product offering. Typical uses include triaging production incidents, processing customer support tickets, automating expense approvals, and powering AI product features. Unlike the other two categories, production agents are often triggered by environmental events such as webhooks, queue messages, and schedules, not by a human typing a prompt. These agents tend to operate with full autonomy.

Even though the major cloud service providers (CSPs) have developed semi-managed and managed offerings to compete with open-source (self-managed) alternatives like LangChain and LangGraph, 81% of CSP-deployed agents remain unmanaged. Furthermore, organizations that test the managed and semi-managed offerings run only 21% of those agents in production, while the rest are in test environments.

Characteristics

Dedicated identities

Production agents are the only category that explicitly requires provisioned service identities and credentials. There is no human identity to inherit. This is architecturally better for security, as identities can be scoped, rotated, and governed through standard processes. However, production agents also introduce a new kind of identity sprawl for most organizations.

Triggered by the environment, not just by humans

These agents respond to webhooks, message queues, scheduled events, and API calls, including data from external, untrusted sources like customer messages, inbound emails, or form submissions. This makes them the category most exposed to prompt injection and adversarial input.

Agent-to-agent delegation

Production agents commonly orchestrate sub-agents or delegate tasks to other agents. This creates chains where original intent can drift, permissions can be implicitly shared, and trust relationships become complex enough that no single person fully understands them.

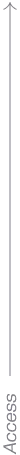

Access vs. Autonomy

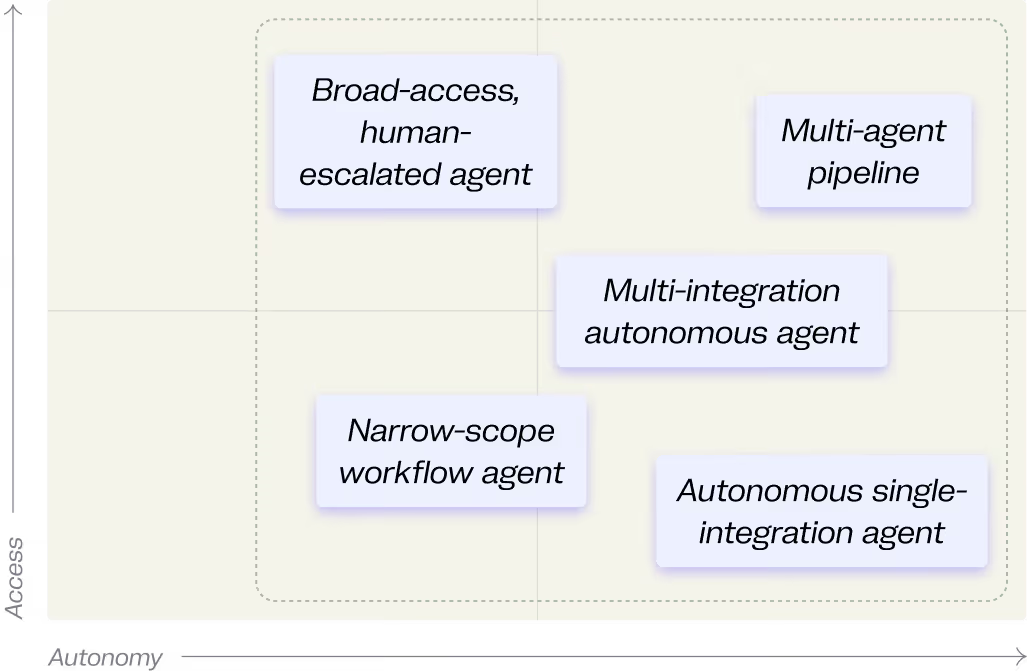

Agent risk is driven by two independent factors: autonomy and system access. Access can be thought of as the blast radius of a potential breach, while autonomy adds unpredictability and reduces human oversight. Together, these two factors determine the agent's risk profile.

Access

The systems, APIs, data, and infrastructure an agent can interact with.

Autonomy

The degree to which an agent can make decisions and execute actions without human approval.

The AI Agent Risk Matrix

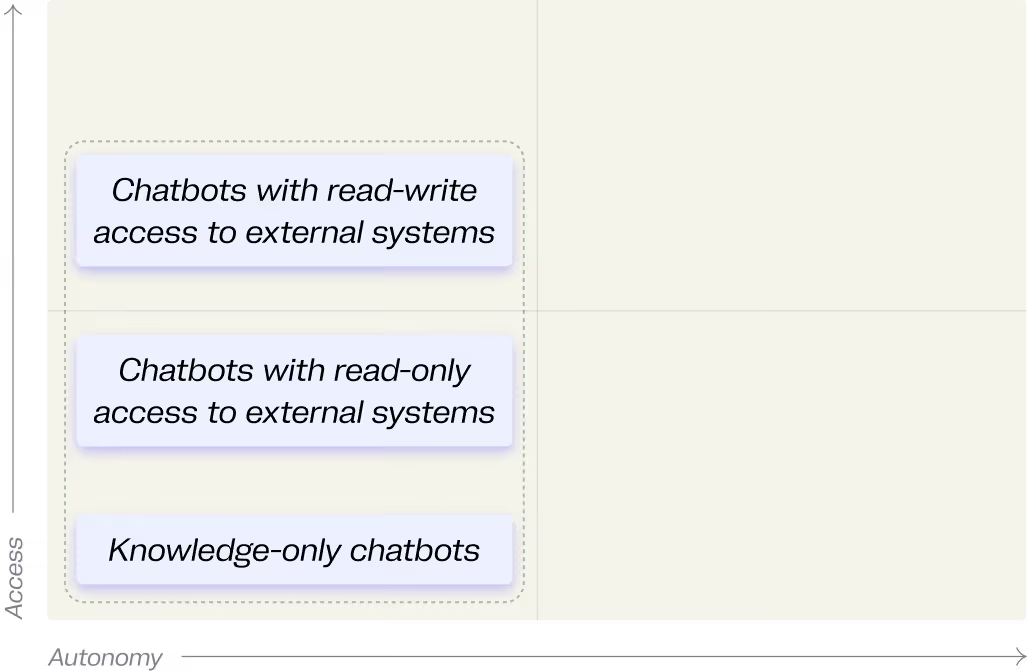

Security teams should evaluate AI agents using a simple framework: AI Agent Risk = Access × Autonomy. By mapping agents across autonomy and access, we can identify four distinct operational profiles.

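The matrix defines four profiles: Supervised Power (high access, low autonomy), Autonomous & Empowered (high access, high autonomy), Bounded Autonomy (low access, high autonomy), and Guided Assistants (low access, low autonomy).

Supervised Power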

Characteristics

- Connected to sensitive systems or data (credentials, APIs, databases)

- Human approval still required before execution

- Access tends to accumulate over time, rarely re-audited

Risk Profile

- Risk is in the configuration, not the runtime: human oversight doesn't protect against credential exposure, over-scoped permissions, or data leakage through the interface

- Configuration-time debt: high access was granted once and never revisited

Autonomous & Empowered

Characteristics

- Agent chains multiple tool calls and decisions within a single run without checkpoints

- Often processes external or untrusted input (webhooks, customer messages, emails)

- Errors compound across connected systems before detection is possible

Risk Profile

- Compound risk: configuration-time exposure + runtime independence

- A single injected prompt or misinterpreted intent can cascade across connected systems

- No single control is sufficient; this profile requires least-privilege, guardrails, and observability together

Bounded Autonomy

Characteristics

- Operates independently on defined workflows

- Limited integrations, narrow permissions

- High volume of autonomous decisions

Risk Profile

- Blast radius per incident is bounded by limited access

- Risk accumulates through frequency: many autonomous decisions, each with a non-zero error rate

- Primary threats are hallucination and misinterpreted intent, not access exploitation

Guided Assistants

Characteristics

- Human triggers and approves each action

- Read-only or knowledge-only access

- Narrow, well-defined task scope

Risk Profile

- Lowest inherent risk

- Main residual risk: unintended data exposure through knowledge/RAG

- Agents gradually drift out of this quadrant as users add integrations and relax approvals
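The four profiles and the Access × Autonomy formula can be sketched in a few lines of Python. This is only an illustration: the 1-to-5 scales, the `HIGH_THRESHOLD` midpoint, and the example agents are assumptions made for the sketch, not values from the research.

```python
from dataclasses import dataclass

# Assumed midpoint on an assumed 1-5 scale; the report defines only
# the formula Risk = Access x Autonomy, not a numeric scale.
HIGH_THRESHOLD = 3


@dataclass(frozen=True)
class Agent:
    name: str
    access: int    # 1 (knowledge-only) to 5 (broad read-write access)
    autonomy: int  # 1 (human approves each action) to 5 (no human in the loop)


def risk_score(agent: Agent) -> int:
    """AI Agent Risk = Access x Autonomy."""
    return agent.access * agent.autonomy


def profile(agent: Agent) -> str:
    """Map an agent to one of the four profiles in the risk matrix."""
    high_access = agent.access >= HIGH_THRESHOLD
    high_autonomy = agent.autonomy >= HIGH_THRESHOLD
    if high_access and high_autonomy:
        return "Autonomous & Empowered"
    if high_access:
        return "Supervised Power"
    if high_autonomy:
        return "Bounded Autonomy"
    return "Guided Assistants"


# Hypothetical fleet, listed from highest to lowest score.
fleet = [
    Agent("support-ticket-pipeline", access=5, autonomy=5),
    Agent("sales-crm-chatbot", access=4, autonomy=1),
    Agent("faq-bot", access=1, autonomy=1),
]
for agent in sorted(fleet, key=risk_score, reverse=True):
    print(f"{agent.name}: score={risk_score(agent)}, profile={profile(agent)}")
```

Re-running a classification like this on a schedule is one way to notice agents drifting out of the Guided Assistants quadrant as integrations are added and approvals are relaxed.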


The Unique Security Risks of Each AI Agent Type

Key Security Risks of Agentic Chatbots

Agentic chatbots are human-triggered and don't make decisions on their own, so they occupy the left side of the graph. The variation between them is almost entirely about access, from knowledge-only chatbots up to chatbots with read-write access to external systems.

Agentic chatbots also carry a unique risk that sits outside this graph: who has access to them. Anyone with access to a chatbot effectively inherits the access it has.

Even though they appear low-risk, chatbot agents introduce several security concerns.

Sharing is uncontrolled

Chatbots are often shared across teams without centralized governance, which exposes sensitive data, grants unintended access to services, and leaks secrets.

Inactive chatbots accumulate silently

These are agents that nobody is using, nobody is reviewing, and in some cases nobody owns, but that are still accessible to parts or all of the organization.
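A review for inactive chatbots could look roughly like the sketch below. It assumes an inventory export with an owner, a last-used timestamp, and a share count per chatbot; the `ChatbotRecord` fields and the 90-day idle window are hypothetical and do not correspond to any specific platform's API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass(frozen=True)
class ChatbotRecord:
    name: str
    owner: Optional[str]  # None when the creator is unknown or has left
    last_used: datetime
    shared_with: int      # number of users who can still reach the chatbot


def find_stale_chatbots(
    records: List[ChatbotRecord],
    now: datetime,
    max_idle: timedelta = timedelta(days=90),  # assumed review window
) -> List[ChatbotRecord]:
    """Return chatbots that are still shared but are idle or have no owner."""
    stale = []
    for record in records:
        idle = now - record.last_used > max_idle
        orphaned = record.owner is None
        if record.shared_with > 0 and (idle or orphaned):
            stale.append(record)
    return stale
```

The result is a candidate list for an owner review or for revoking sharing, not an automatic deletion list.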

The creator becomes the access governor

When a sales manager builds a Custom GPT with an admin-level Salesforce API key and shares it with the entire sales organization, they've made an access governance decision by granting dozens of people access to a privileged identity outside any security review. The people using the chatbot may not even know what credentials power it.

Shared knowledge bypasses existing access controls

Uploading HR compensation data or competitive intelligence into a chatbot's knowledge base makes it queryable by anyone the chatbot is shared with regardless of whether they had access to the source files. The platform does not enforce the original document’s permissions.
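One possible mitigation is to carry the source file's permissions along with each uploaded document and check them at query time. The sketch below assumes the platform, or a layer in front of it, can attach group metadata to knowledge-base entries; `Document`, `allowed_groups`, and `retrieve` are hypothetical names, and the substring match stands in for a real retrieval step.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Set


@dataclass(frozen=True)
class Document:
    title: str
    text: str
    allowed_groups: FrozenSet[str]  # groups permitted on the source file


def retrieve(query: str, documents: List[Document], user_groups: Set[str]) -> List[Document]:
    """Return matching documents that the requesting user could already read."""
    results = []
    for doc in documents:
        if not (doc.allowed_groups & user_groups):
            continue  # the user has no access to the source file
        if query.lower() in doc.text.lower():
            results.append(doc)
    return results


# Hypothetical knowledge base shared with the whole company.
knowledge_base = [
    Document("Compensation bands", "Salary bands by level", frozenset({"hr"})),
    Document("Employee handbook", "Salary review process", frozenset({"hr", "all-staff"})),
]
visible = retrieve("salary", knowledge_base, {"all-staff"})
print([doc.title for doc in visible])
```

With this check in place, sharing the chatbot widely no longer widens access to the HR-only file.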

Key Security Risks of Local Agents

Local agents are the most configurable, with each user deciding independently how much access and autonomy their agent gets. As a result, local agents can occupy any point on the graph. The examples here range from a locked-down sandboxed code editor to a fully autonomous assistant with broad system access.

Instead of security teams determining access policies, every employee effectively becomes the identity administrator for their agent.

Agent actions can be indistinguishable from human actions

Because agents inherit their user’s identity, their actions show up in every target system's audit logs as normal human activity. Without endpoint-level instrumentation, distinguishing what the agent did from what the human did is often effectively impossible.

Invisible to security teams

Agent configurations live in scattered local files on individual endpoints. There is no centralized view of which agents exist, what access they've been given, and which MCP servers or plugins are installed.

Over-permissioning is the path of least resistance

Granting broad access makes the agent immediately more useful. Restricting access requires security judgment most users don't have. Approval fatigue accelerates this further. Users often resort to auto-approving everything, defeating the one guardrail they have.

Supply chain risk is amplified

Users install MCP servers, plugins, and extensions from unvetted sources to extend their agent's capabilities. Unlike traditional software, much of this ecosystem consists of natural language instructions, which currently lack mature security tooling. This makes it much easier for users to be compromised or to accidentally install malicious instructions.

35.1% of MCP servers are non-official (community or otherwise unknown), while 64.9% are official.

Key Security Risks of Production Agents

Production agents default to high autonomy as they are triggered by environmental events, not human prompts, and do not include human approval flows unless explicitly built in. Most sit on the right side of the graph. Their access level varies widely depending on purpose, from narrow single-integration agents to multi-agent pipelines with broad system access. Unlike the other two categories, production agents require their own dedicated identities as there is no human identity to inherit.

Production agents introduce risks similar to service identities in cloud infrastructure, but with autonomous decision-making capabilities. These agents can run continuously and react to environmental triggers with minimal human oversight.

Dedicated identity management is challenging

Just like any other non-human identity (NHI), identities created for production agents require proper lifecycle management, right-sized permissions, and credential management.

Agent-to-agent communication and delegation lead to complexity

Production agents may trigger other agents, which creates complex trust chains and identity delegation paths. When an orchestrator delegates to sub-agents sharing the same identity, every sub-agent inherits the full permission set.
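One way to avoid full inheritance is to down-scope on every delegation, so that a sub-agent receives only the intersection of what it requests and what the orchestrator holds. This is a minimal sketch under that assumption; the permission strings and the `delegate_scope` helper are hypothetical.

```python
from typing import FrozenSet


def delegate_scope(parent: FrozenSet[str], requested: FrozenSet[str]) -> FrozenSet[str]:
    """Grant a sub-agent only permissions it requested and the parent holds."""
    return parent & requested


# Hypothetical orchestrator permissions.
orchestrator = frozenset({"tickets:read", "tickets:write", "billing:refund"})

# A summarizer sub-agent only needs to read tickets.
summarizer = delegate_scope(orchestrator, frozenset({"tickets:read"}))

# A request for something the parent lacks is silently dropped, so
# delegation can never escalate beyond the orchestrator's own access.
escalation = delegate_scope(orchestrator, frozenset({"tickets:read", "hr:read"}))

print(sorted(summarizer))
print(sorted(escalation))
```

In practice this would be paired with separate credentials per sub-agent, since a shared credential makes the narrower scope unenforceable.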

Untrusted external input meets high autonomy

Production agents are often the only agents directly processing data from outside the organization, such as customer messages, webhook payloads, and inbound emails. Combined with high autonomy and broad system access, a prompt injection in an incoming support ticket can cascade through connected systems before anyone notices.
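A simple guardrail for this case is a checkpoint in front of the agent's tools: state-changing actions triggered by untrusted input are held for approval rather than executed directly. The sketch below is illustrative only; the action names, the `ToolCall` shape, and the two-outcome policy are assumptions.

```python
from dataclasses import dataclass

# Hypothetical set of state-changing actions for a support agent.
WRITE_ACTIONS = frozenset({"update_record", "send_email", "issue_refund"})


@dataclass(frozen=True)
class ToolCall:
    action: str
    triggered_by_untrusted_input: bool  # e.g. inbound email or webhook payload


def decide(call: ToolCall) -> str:
    """Return 'require_approval' for risky calls, otherwise 'allow'."""
    if call.action in WRITE_ACTIONS and call.triggered_by_untrusted_input:
        return "require_approval"
    return "allow"


print(decide(ToolCall("issue_refund", triggered_by_untrusted_input=True)))
print(decide(ToolCall("search_kb", triggered_by_untrusted_input=True)))
```

A gate like this does not detect prompt injection; it only limits how far an injected instruction can travel before a human sees it.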

Steps to Realize Immediate AI Agent Security ROI

Remove unnecessary privileges from high-access agents

Limit high-access high-autonomy primitives for local agents (full local code execution, write access to prod, admin access to CSP, etc.)

Restrict medium-to-high-access agentic chatbots to only the access they require

Improve agent configuration posture (accurate intents, vetted tools, credential management, guardrail configurations)

Improve observability for medium-to-high-autonomy agents, especially agents processing untrusted input

How Token Security Helps CISOs Prioritize AI Agent Security

Token Security is AI-platform agnostic. It continuously discovers agents, maps the identities they use, analyzes their intent and permissions, enforces access policies, and automates remediation across the enterprise. Security teams should focus on three foundational priorities.

Discover All AI Agents

Understand Agent Intent
